Measuring Architecture Complexity from Whiteboards

by Guillermo Quiros

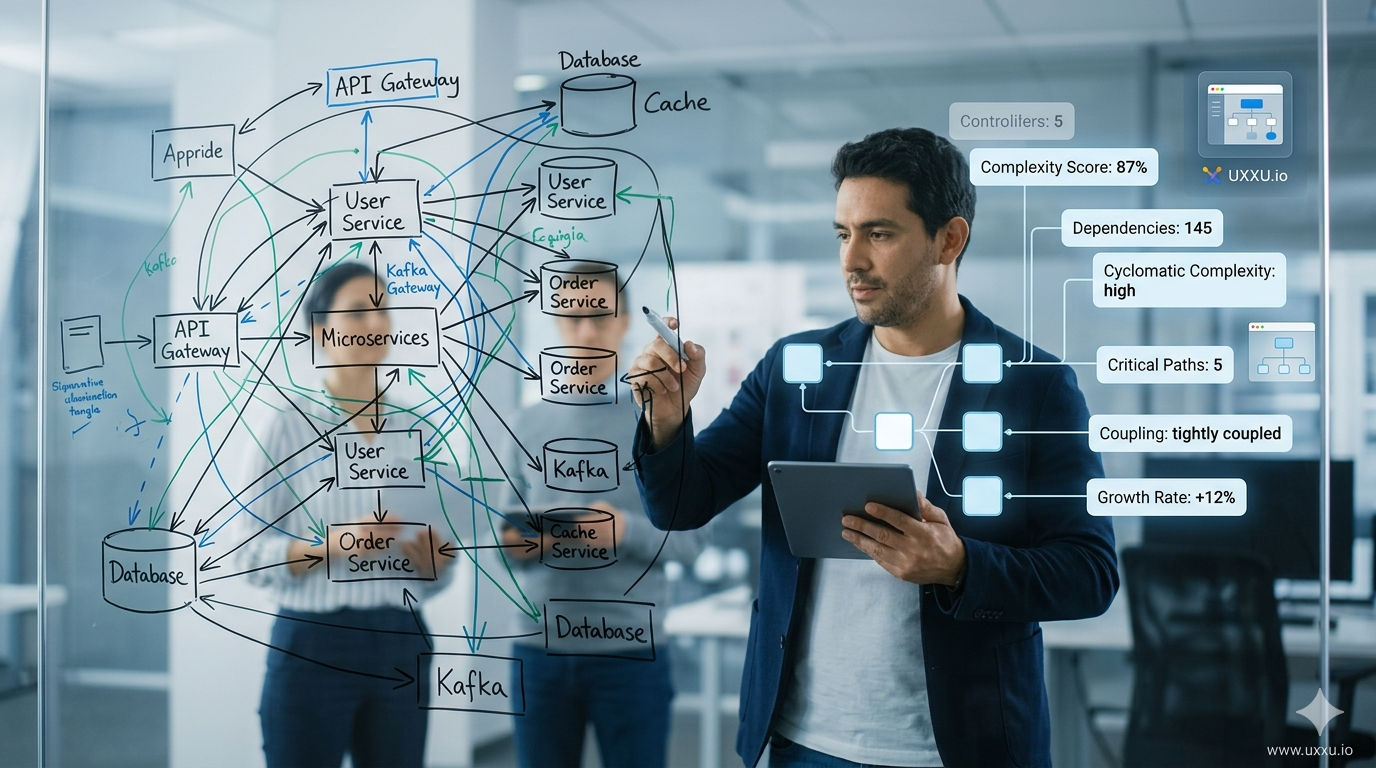

Whiteboard diagrams look simple because complexity is invisible on a board. A clean sketch feels clear during a meeting but fails to capture how many systems exist, how they depend on each other, or how quickly the architecture is growing. Without structure, complexity accumulates silently — until slow deployments, difficult debugging, and expensive refactoring make the problem undeniable.

This article explains why whiteboard diagrams cannot measure architecture complexity, what metrics teams should track, and how structured architecture modeling makes complexity visible and actionable.

Key Takeaways

- Whiteboard diagrams rely on visual intuition, not measurable data — a clean board does not mean a simple system

- Architecture complexity should be measured using: architecture size, diagram density, dependency concentration, technology lifecycle, and architectural activity

- Structured architecture modeling treats architecture as data, not drawings, enabling real analysis

- Analytics can surface structural risks early: tightly coupled systems, overloaded components, single points of failure

- Teams that measure complexity can make architectural decisions based on evidence rather than guesswork

A diagram that looks simple on a whiteboard does not mean the system behind it is simple.

The Illusion of Simplicity

In many engineering teams, architecture discussions happen on whiteboards.

During meetings, engineers quickly sketch:

- systems

- services

- databases

- APIs

- arrows showing interactions

In the moment, everything feels clear.

The team understands the context of the discussion, so the diagram makes sense.

But this clarity is temporary.

Once the meeting ends, the diagram becomes difficult to interpret.

Arrows overlap.

Notes appear in corners.

Dependencies are implied rather than explained.

What seemed clear during the conversation becomes ambiguous afterward.

Why Complexity Is Hard to Measure

Whiteboard diagrams create a major problem: they cannot be measured.

Teams often rely on visual intuition to judge complexity.

Typical signals include:

- “The board looks crowded.”

- “There are too many arrows.”

- “This system seems complicated.”

But visual impressions are unreliable.

A clean-looking diagram might hide deep complexity.

Meanwhile, a messy diagram might represent a system that is actually well-structured.

Without structure, architecture diagrams become subjective artifacts rather than measurable models.

The Hidden Growth of Complexity

Another problem with whiteboard architecture is the lack of history.

Most teams cannot easily answer questions like:

- How many systems existed six months ago?

- How many services depend on this component?

- Which systems are becoming central points of failure?

- How quickly is the architecture growing?

Whiteboards cannot track trends.

So complexity grows quietly.

Dependencies accumulate.

Systems become tightly coupled.

Responsibilities blur between services.

Eventually the architecture becomes fragile, and teams feel the consequences through:

- slower development

- harder deployments

- difficult debugging

- expensive refactoring

But by the time the problem becomes visible, the system is already deeply complex.

Moving From Drawings to Models

The solution is not simply better diagrams.

The real shift comes from treating architecture as a structured model instead of a drawing.

Instead of asking “How does the diagram look?”, teams begin asking “What does the architecture actually contain?”

This includes understanding:

- how many systems exist

- how they interact

- where dependencies concentrate

- how the system evolves over time

Resources on Uxxu explain how structured architecture modeling makes complexity visible rather than overwhelming.

By breaking systems into clear levels, teams can analyze architecture instead of merely sketching it.
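To make "architecture as data" concrete, here is a minimal sketch in Python. The class names (`Element`, `Relationship`, `ArchitectureModel`) and the sample systems are hypothetical, invented for illustration; they are not the data model of any particular tool.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Element:
    """One node in the architecture model (a system, container, store, ...)."""
    name: str
    kind: str


@dataclass(frozen=True)
class Relationship:
    """A directed dependency: `source` depends on `target`."""
    source: str
    target: str


@dataclass
class ArchitectureModel:
    elements: list[Element] = field(default_factory=list)
    relationships: list[Relationship] = field(default_factory=list)

    def dependents_of(self, name: str) -> list[str]:
        """Names of elements that depend directly on `name`, sorted."""
        return sorted(r.source for r in self.relationships if r.target == name)


# Hypothetical example data, invented for illustration.
model = ArchitectureModel(
    elements=[
        Element("web-app", "application"),
        Element("orders-service", "container"),
        Element("billing-service", "container"),
        Element("orders-db", "store"),
    ],
    relationships=[
        Relationship("web-app", "orders-service"),
        Relationship("orders-service", "orders-db"),
        Relationship("billing-service", "orders-db"),
    ],
)

print(len(model.elements))               # 4
print(model.dependents_of("orders-db"))  # ['billing-service', 'orders-service']
```

Once the architecture lives in a structure like this, questions such as "how many services depend on this component?" become queries rather than guesses.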

Making Complexity Visible

When architecture is modeled structurally, teams gain something whiteboards cannot provide: architecture insights.

Instead of guessing, teams can observe measurable indicators of complexity.

Using structured modeling tools like Uxxu, these insights become visible across the entire system.

Architecture Insights

Architecture Size

Understanding the scale of a system is the first step toward understanding complexity.

Architecture size includes elements such as:

- systems

- applications

- containers

- stores

- actors

- groups and services

Tracking these elements reveals how large a system actually is.

A growing number of components may indicate:

- architectural expansion

- domain growth

- increasing operational overhead

By monitoring architecture size, teams can detect when a system is becoming difficult to manage.
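As a rough illustration, architecture size can be computed as a total plus a per-kind breakdown. The inventory below and the function name `architecture_size` are assumptions made for this sketch.

```python
from collections import Counter

# Hypothetical inventory of (element name, element kind) pairs.
elements = [
    ("customer", "actor"),
    ("web-app", "application"),
    ("orders-service", "container"),
    ("billing-service", "container"),
    ("orders-db", "store"),
]


def architecture_size(elements):
    """Return the total element count and a per-kind breakdown."""
    by_kind = Counter(kind for _, kind in elements)
    return len(elements), dict(by_kind)


total, by_kind = architecture_size(elements)
print(total)    # 5
print(by_kind)  # {'actor': 1, 'application': 1, 'container': 2, 'store': 1}
```

Recording these counts at regular intervals is what turns a single number into a growth trend.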

Diagram Metrics

Another important indicator is diagram structure.

Key metrics include:

- total number of diagrams

- diagram density

- elements per diagram

Diagram density reveals how much information is packed into a single view.

High density may indicate:

- overloaded diagrams

- unclear abstraction levels

- excessive coupling

Monitoring these metrics helps teams maintain clear architectural boundaries.
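One possible way to compute diagram density, taking it as the number of relationships divided by the number of elements in a diagram. The diagram names, counts, and the threshold of 2.0 are arbitrary values chosen for this sketch, not recommended limits.

```python
def diagram_density(element_count: int, relationship_count: int) -> float:
    """Relationships per element; 0.0 for an empty diagram."""
    if element_count == 0:
        return 0.0
    return relationship_count / element_count


# Hypothetical diagrams: name -> (element count, relationship count).
diagrams = {
    "system-context": (4, 3),
    "orders-containers": (5, 12),
}

DENSITY_THRESHOLD = 2.0  # arbitrary cut-off, an assumption for illustration

densities = {name: diagram_density(e, r) for name, (e, r) in diagrams.items()}
crowded = [name for name, d in densities.items() if d > DENSITY_THRESHOLD]

print(densities)  # {'system-context': 0.75, 'orders-containers': 2.4}
print(crowded)    # ['orders-containers']
```

A diagram flagged this way is a candidate for splitting into separate views at different abstraction levels.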

Dependencies and Usage

Dependencies often reveal the true structure of a system.

In complex architectures, some components become heavily reused.

These components may serve as:

- shared services

- integration layers

- gateways

- data hubs

While reuse can be beneficial, concentrated dependencies may also indicate risk.

Architecture analytics can reveal:

- which systems depend on others

- which components are heavily used

- where coupling is increasing

These insights help teams identify potential architectural bottlenecks.
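Dependency concentration can be approximated by counting how many distinct elements depend directly on each target (often called fan-in). The relationship list and the `fan_in` helper below are hypothetical, a sketch rather than a definitive implementation.

```python
# Hypothetical (source, target) pairs: source depends on target.
relationships = [
    ("web-app", "api-gateway"),
    ("mobile-app", "api-gateway"),
    ("api-gateway", "orders-service"),
    ("api-gateway", "billing-service"),
    ("orders-service", "shared-db"),
    ("billing-service", "shared-db"),
    ("reporting-job", "shared-db"),
]


def fan_in(relationships):
    """Count the distinct direct dependents of every target element."""
    dependents = {}
    for source, target in relationships:
        dependents.setdefault(target, set()).add(source)
    return {target: len(sources) for target, sources in dependents.items()}


counts = fan_in(relationships)

# Sort by descending fan-in, then by name so ties are stable.
most_depended_on = sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))

print(most_depended_on[0])  # ('shared-db', 3)
```

Elements at the top of this ranking are where reuse and risk both concentrate.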

Technology Lifecycle

Architecture complexity is not only structural — it is also technological.

Over time, systems accumulate technologies that move through different lifecycle stages:

- Production – actively used and stable

- Future – planned or experimental

- Deprecated – scheduled for replacement

- Removed – no longer supported

Tracking technology lifecycle helps teams manage:

- technical debt

- modernization strategies

- technology adoption

Without visibility into technology lifecycle, systems gradually accumulate outdated tools that increase operational complexity.
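A small sketch of how lifecycle distribution might be tracked: tag each technology with a stage and compute each stage's share. The technology names are placeholders invented for this example.

```python
from collections import Counter

# Hypothetical technology register: name -> lifecycle stage.
technologies = {
    "primary-database": "production",
    "message-broker": "production",
    "new-runtime": "future",
    "legacy-frontend-framework": "deprecated",
}


def lifecycle_share(technologies):
    """Return the fraction of technologies in each lifecycle stage."""
    counts = Counter(technologies.values())
    total = len(technologies)
    return {stage: count / total for stage, count in counts.items()}


shares = lifecycle_share(technologies)
print(shares)  # {'production': 0.5, 'future': 0.25, 'deprecated': 0.25}
```

A rising deprecated share over successive snapshots is one measurable signal of accumulating technical debt.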

Architecture Activity

Architecture evolves continuously.

Tracking architectural activity reveals how systems grow over time.

Examples include:

- new systems created

- new containers introduced

- components added or removed

Observing these changes helps teams answer questions such as:

- Which parts of the architecture evolve most rapidly?

- Which domains are expanding?

- Are new dependencies being introduced?

Architecture activity provides a temporal dimension to architecture understanding.
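Architecture activity can be derived by comparing two snapshots of the model. The sketch below assumes each snapshot is simply a set of element names; the snapshot contents and the `snapshot_diff` helper are hypothetical.

```python
def snapshot_diff(before: set[str], after: set[str]) -> dict[str, list[str]]:
    """List the elements added and removed between two snapshots."""
    return {
        "added": sorted(after - before),
        "removed": sorted(before - after),
    }


# Hypothetical snapshots taken six months apart.
six_months_ago = {"web-app", "orders-service", "orders-db", "legacy-batch"}
today = {"web-app", "orders-service", "orders-db", "billing-service", "api-gateway"}

activity = snapshot_diff(six_months_ago, today)
print(activity)
# {'added': ['api-gateway', 'billing-service'], 'removed': ['legacy-batch']}

net_growth = len(today) - len(six_months_ago)
print(net_growth)  # 1
```

The same comparison applied to relationships instead of elements would show whether new dependencies are being introduced.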

Structural Risk Indicators

Some architectural patterns signal potential risks.

These may include:

- highly coupled systems

- dependency concentration

- overloaded components

- possible single points of failure

Without analytics, these risks often remain invisible until a failure occurs.

Architecture analysis tools can highlight these patterns early, allowing teams to address them before they cause major problems.
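One way to look for possible single points of failure is to count how many elements depend on a component directly or indirectly. The sketch below does this with a breadth-first search over reversed dependency edges; the data and the function name `transitive_dependents` are assumptions for illustration.

```python
from collections import defaultdict, deque

# Hypothetical (source, target) pairs: source depends on target.
relationships = [
    ("web-app", "api-gateway"),
    ("api-gateway", "orders-service"),
    ("api-gateway", "billing-service"),
    ("orders-service", "shared-db"),
    ("billing-service", "shared-db"),
]


def transitive_dependents(relationships, target):
    """Return every element that depends on `target`, directly or indirectly."""
    reverse = defaultdict(set)  # target -> its direct dependents
    for source, dest in relationships:
        reverse[dest].add(source)

    seen = set()
    queue = deque([target])
    while queue:
        current = queue.popleft()
        for dependent in reverse[current]:
            # The `seen` check also keeps the search finite if cycles exist.
            if dependent != target and dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    return seen


affected = transitive_dependents(relationships, "shared-db")
print(len(affected))  # 4
```

Here a failure of `shared-db` would reach four of the five elements in the model, which is the kind of pattern that rarely stands out on a whiteboard.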

Why Architecture Analytics Matter

Traditional diagram tools focus on visualization.

They help teams draw diagrams.

But drawing is only part of the challenge.

Understanding architecture requires analysis.

Uxxu goes further than visualization by treating architecture as a system that can be analyzed.

Instead of simply displaying boxes and arrows, it helps teams:

- understand architectural scale

- detect structural risks

- monitor system evolution

- identify fragile dependencies

This transforms architecture diagrams from static documentation into living insights about system structure.

From Guessing to Understanding

Before structured architecture modeling, teams often relied on intuition.

If a whiteboard looked crowded, they assumed the system was complex.

If it looked clean, they assumed everything was fine.

This approach is unreliable.

By modeling architecture structurally and analyzing it with architecture insights, teams can move from guessing to understanding.

Complexity becomes measurable.

Risks become visible.

Architecture becomes something teams can reason about, not just draw.

Conclusion

Whiteboard diagrams are useful for brainstorming.

But they cannot measure architecture complexity.

Without structure, teams cannot:

- track growth

- monitor dependencies

- identify risks

- understand system evolution

Architecture analytics changes this.

By treating architecture as a model rather than a drawing, teams gain the ability to analyze how systems evolve and where complexity emerges.

This shift turns architecture diagrams into something far more valuable: a lens for understanding the true structure of software systems.

Frequently Asked Questions

What is architecture complexity in software systems?

Architecture complexity refers to how difficult a software system is to understand, change, and maintain. It is driven by several factors: the number of components and services, the density of dependencies between them, the degree of coupling across domain boundaries, and the accumulation of deprecated or legacy technologies. A system can appear simple in a diagram while being architecturally complex beneath the surface — which is why visual impressions alone are not a reliable measure.

How do you measure software architecture complexity?

Software architecture complexity can be measured through a set of structural metrics applied to a model of the system. Key indicators include architecture size (total count of systems, containers, components, actors, and stores), diagram density (the ratio of relationships to elements in each diagram), dependency concentration (how many components depend on a single system or service), technology lifecycle distribution (the proportion of deprecated versus active technologies), and architecture growth rate (how many new elements are added over time). These metrics provide an objective, data-driven view of complexity.

Why are whiteboard diagrams not enough for managing architecture complexity?

Whiteboard diagrams rely on visual interpretation and cannot be queried, measured, or tracked over time. They show a snapshot of what engineers believe the system looks like at one moment, but they do not capture how many dependencies actually exist, which components are most heavily used, or how the architecture has grown over past months. Without measurable data, teams can only guess whether complexity is increasing — and they typically find out too late, when slowdowns, failures, or expensive refactoring make the problem impossible to ignore.

What is diagram density and why does it matter?

Diagram density is the number of relationships relative to the number of elements in a diagram. A high-density diagram contains many connections between a relatively small number of components. This often signals excessive coupling, unclear separation of responsibilities, or that a single diagram is trying to represent too many levels of abstraction at once. Monitoring density across diagrams helps teams identify where architectural boundaries need to be drawn more clearly.

What are architectural single points of failure and how can you detect them?

An architectural single point of failure is a component that so many other systems depend on that its failure would cascade through a large part of the architecture. These components are not always obvious from diagrams, but they become visible when you analyze dependency counts — specifically, which components appear most frequently as targets of relationships across the entire model. Architecture analytics tools that map dependency concentration can surface these patterns proactively, before a failure event reveals them in production.